An attorney has issued a warning over an elaborate AI voice-cloning scam which nearly fooled his dad into handing over $35,000.

You may have heard about election interference scams that use AI to recreate a candidate’s voice to spread disinformation about voting—but that same artificial intelligence is being used to create panic in family members over the phone.

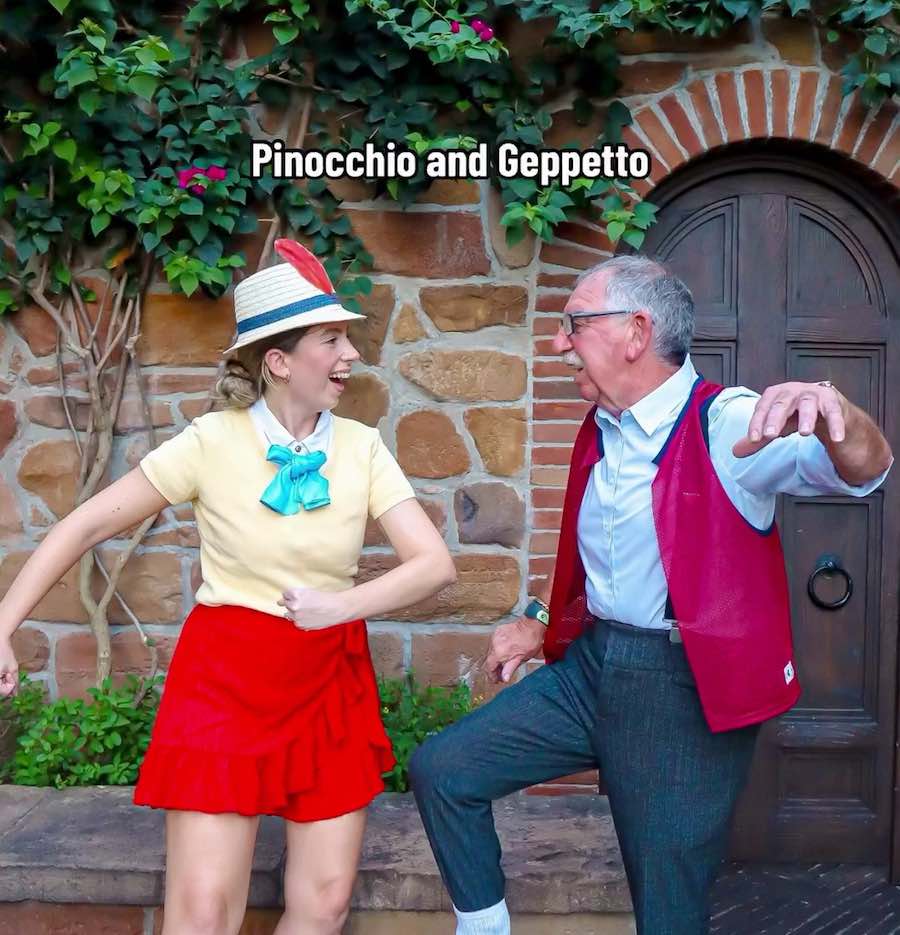

Scammers impersonating Jay Shooster called his dad Frank, convincing him that his son had been in a serious car accident, was arrested, and needed bail money.

Even though he’s an educated retired attorney, the terrified 70-year-old had no idea it was not his son.

“I got a phone call and it was my son, Jay. He was hysterical, but I knew his voice immediately.

“He said he had been in an accident, broke his nose, had 16 stitches, and was in police custody because he tested positive for alcohol after a breathalyzer, blaming it on the cough syrup he had taken earlier.”

His 34-year-old son, who is campaigning for the Florida House of Representatives in District 91 in Boca Raton, believes the scammers managed to create the fake voice from his 15-second TV campaign ad.

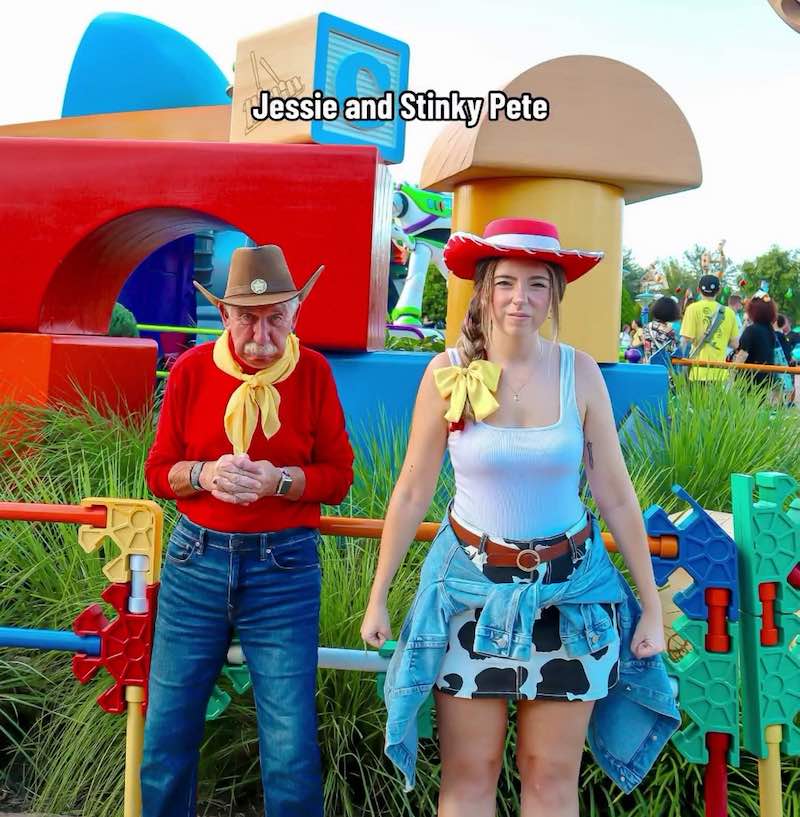

On September 28, the impersonator pleaded with Frank not to tell anyone about the situation. Moments later, another man, identifying himself as attorney ‘Mike Rivers’, said Jay needed a $35,000 cash bond to avoid being held in jail for several days.

The scam escalated when ‘Rivers’ instructed Frank to pay the bond via a cryptocurrency machine—an unconventional request that heightened Frank’s suspicions.

“I became suspicious when he told me to go to a Coinbase machine at Winn-Dixie,” Frank says. “I didn’t understand how that was part of the legal process.”

He eventually realized something was not right after his daughter, whom he was visiting, told him that AI voice-cloning scams were on the rise, and he hung up the phone.

“It’s devastating to get that kind of call,” said Frank. “My son has worked so hard, and I was beside myself, thinking his career and campaign could be in ruins.”

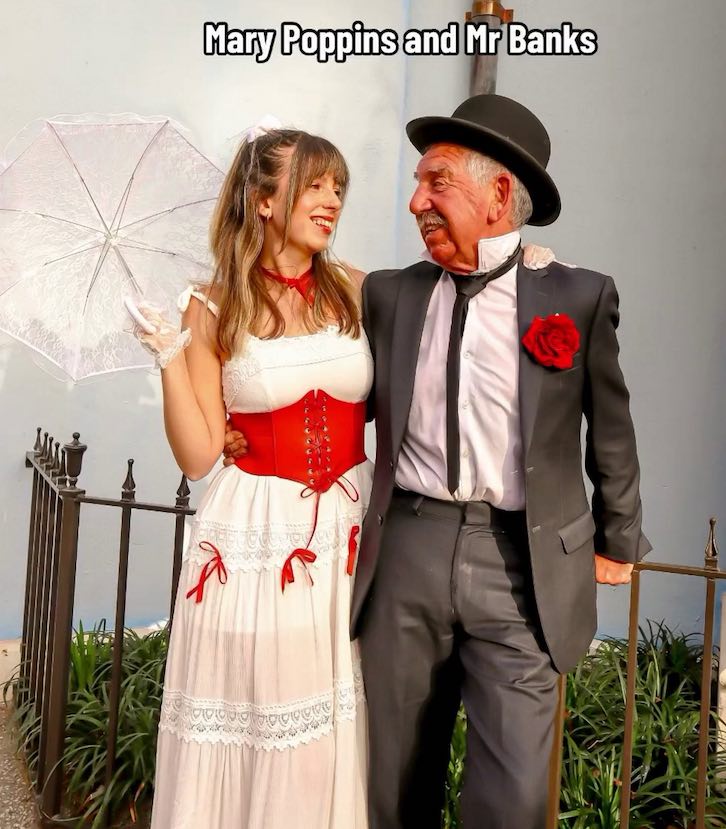

Jay, an attorney who often goes to court on consumer fraud cases, was shocked to find himself a target.

“I’ve been paying attention to AI and its effects on consumers, but nothing prepares you for when it happens to you,” Jay says.

“They did their research. They didn’t use my phone number, which fit the story that I was in jail without access to my phone.”

The scam’s sophistication left Jay stunned. “All it takes is a few seconds of someone’s voice,” he says. “The technology is so advanced that they could have easily pulled my voice from my 15-second campaign ad.”

Jay is advocating for changes in AI regulation to prevent such scams from harming others.

“There are three key policy solutions we need,” he says. “First, AI companies must be held accountable if their products are misused.

“Second, companies should require authentication before cloning anyone’s voice. And third, AI-generated content should be watermarked, so it’s easily detectable, whether it’s a cloned voice or a fake video.”

If elected to the Florida House of Representatives, Jay plans to take action against the rising misuse of AI technology, including voice-cloning scams.

He aims to introduce legislation that would hold AI companies liable for misuse, ensuring they implement necessary safeguards such as voice authentication and watermarking.

“We need to create clear regulations to stop these types of crimes from happening,” Jay says. “It’s not just about technology — it’s about protecting people from the trauma and financial damage that can result from these scams.

“I want to push for more stringent requirements for AI developers to ensure their tools are not used maliciously.”

As AI technology rapidly evolves, Jay and Frank hope their story serves as a warning for others to stay vigilant.

“This shows how important it is to stay calm and think things through carefully,” Frank notes. “You have to listen and ask questions if something doesn’t add up. Scams like this are becoming more sophisticated, but we can’t let our guard down.”
